An outlier detection method that supports categorical data and provides explanations for the outliers flagged

Outlier detection is a common task in machine learning. Specifically, it’s a form of unsupervised machine learning: analyzing data where there are no labels. It’s the act of finding items in a dataset that are unusual relative to the others in the dataset.

There can be many reasons to wish to identify outliers in data. If the data being examined is accounting records and we’re interested in finding errors or fraud, there are usually far too many transactions in the data to examine each manually, and it’s necessary to select a small, manageable number of transactions to investigate. A good starting point can be to find the most unusual records and examine these, on the idea that errors and fraud should both be rare enough to stand out as outliers.

That is, not all outliers will be interesting, but errors and fraud will likely be outliers, so when looking for these, identifying the outliers can be a very practical technique.

Or, the data may contain credit card transactions, sensor readings, weather measurements, biological data, or logs from websites. In all cases, it can be useful to identify the records suggesting errors or other problems, as well as the most interesting records.

Often as well, outlier detection is used as part of business or scientific discovery, to better understand the data and the processes being described in the data. With scientific data, for example, we’re often interested in finding the most unusual records, as these may be the most scientifically interesting.

The need for interpretability in outlier detection

With classification and regression problems, it’s often preferable to use interpretable models. This can result in lower accuracy (with tabular data, the highest accuracy is usually found with boosted models, which are quite uninterpretable), but is also safer: we know how the models will handle unseen data. But, with classification and regression problems, it’s also common to not need to understand why individual predictions are made as they are. So long as the models are reasonably accurate, it may be sufficient to just let them make predictions.

With outlier detection, though, the need for interpretability is much higher. Where an outlier detector predicts a record is very unusual, if it’s not clear why this may be the case, we may not know how to handle the item, or even if we should believe it is anomalous.

In fact, in many situations, performing outlier detection can have limited value if there isn’t a good understanding of why the items flagged as outliers were flagged. If we are checking a dataset of credit card transactions and an outlier detection routine identifies a series of purchases that appear to be highly unusual, and therefore suspicious, we can only investigate these effectively if we know what is unusual about them. In some cases this may be obvious, or it may become clear after spending some time examining them, but it is much more effective and efficient if the nature of the anomalies is clear from the moment they are discovered.

As with classification and regression, in cases where interpretability is not possible, it is often possible to try to understand the predictions using what are called post-hoc (after-the-fact) explanations. These use XAI (Explainable AI) techniques such as feature importances, proxy models, ALE plots, and so on. These are also very useful and will be covered in future articles. But, there is also a very strong benefit to having results that are clear in the first place.

In this article, we look specifically at tabular data, though we will look at other modalities in later articles. There are a number of algorithms for outlier detection on tabular data commonly used today, including Isolation Forests, Local Outlier Factor (LOF), KNNs, One-Class SVMs, and quite a number of others. These often work very well, but unfortunately most do not provide explanations for the outliers found.

Most outlier detection methods are straightforward to understand at an algorithm level, but it is nevertheless difficult to determine why some records were scored highly by a detector and others were not. If we process a dataset of financial transactions with, for example, an Isolation Forest, we can see which are the most unusual records, but may be at a loss as to why, especially if the table has many features, if the outliers contain rare combinations of multiple features, or if the outliers are cases where no features are highly unusual, but multiple features are moderately unusual.

Frequent Patterns Outlier Factor (FPOF)

We’ve now gone over, at least quickly, outlier detection and interpretability. The remainder of this article is an excerpt from my book Outlier Detection in Python (https://www.manning.com/books/outlier-detection-in-python), which covers FPOF specifically.

FPOF (FP-outlier: Frequent pattern based outlier detection) is one of a small handful of detectors that can provide some level of interpretability for outlier detection, and it deserves to be used more than it is.

It also has the appealing property of being designed to work with categorical, as opposed to numeric, data. Most real-world tabular data is mixed, containing both numeric and categorical columns. But, most detectors assume all columns are numeric, requiring all categorical columns to be numerically encoded (using one-hot, ordinal, or another encoding).

Where detectors, such as FPOF, assume the data is categorical, we have the opposite issue: all numeric features must be binned to be in a categorical format. Either is workable, but where the data is primarily categorical, it’s convenient to be able to use detectors such as FPOF.

And, there’s a benefit when working with outlier detection to have at our disposal both some numeric detectors and some categorical detectors. As there are, unfortunately, relatively few categorical detectors, FPOF is also useful in this regard, even where interpretability is not necessary.

The FPOF algorithm

FPOF works by identifying what are called Frequent Item Sets (FISs) in a table. These are either values in a single feature that are very common, or sets of values spanning several columns that frequently appear together.

Almost all tables contain a significant collection of FISs. FISs based on single values will occur so long as some values in a column are significantly more common than others, which is almost always the case. And FISs based on multiple columns will occur so long as there are associations between the columns: certain values (or ranges of numeric values) tend to be associated with other values (or, again, ranges of numeric values) in other columns.

FPOF is based on the idea that, so long as a dataset has many frequent item sets (which almost all do), most rows will contain multiple frequent item sets, and inlier (normal) records will contain significantly more frequent item sets than outlier rows. We can take advantage of this to identify outliers as rows that contain far fewer, and far less frequent, FISs than most rows.
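To make the idea concrete, here is a minimal sketch on a made-up five-row table. It uses a brute-force search rather than a real mining algorithm, and the rows, item names, and the helper functions mine_frequent_itemsets() and normality_scores() are all hypothetical, introduced only for illustration.

```python
from itertools import combinations

# Toy categorical table: each row is a set of "column=value" items (made-up data)
rows = [
    {"color=red", "size=small", "shape=round"},
    {"color=red", "size=small", "shape=round"},
    {"color=red", "size=small", "shape=square"},
    {"color=red", "size=large", "shape=round"},
    {"color=blue", "size=large", "shape=square"},
]


def mine_frequent_itemsets(rows, min_support):
    """Brute-force search (fine for toy data only): return {itemset: support}
    for every item set appearing in at least min_support of the rows."""
    counts = {}
    for row in rows:
        for k in range(1, len(row) + 1):
            for combo in combinations(sorted(row), k):
                key = frozenset(combo)
                counts[key] = counts.get(key, 0) + 1
    n = len(rows)
    return {fis: c / n for fis, c in counts.items() if c / n >= min_support}


def normality_scores(rows, fis_support):
    """For each row, sum the support of every frequent item set it contains."""
    return [
        sum(s for fis, s in fis_support.items() if fis <= row)
        for row in rows
    ]


fis_support = mine_frequent_itemsets(rows, min_support=0.6)
scores = normality_scores(rows, fis_support)

# The row with the lowest normality score is the strongest outlier
most_unusual = min(range(len(rows)), key=lambda i: scores[i])
print(scores, most_unusual)
```

The last row shares no frequent item set with the others, so it receives the lowest normality score. The first two rows contain every frequent item set and score highest.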

Example with real-world data

For a real-world example of using FPOF, we look at the SpeedDating set from OpenML (https://www.openml.org/search?type=data&sort=nr_of_likes&status=active&id=40536, licensed under CC BY 4.0 DEED).

Executing FPOF begins with mining the dataset for the FISs. A number of libraries are available in Python to support this. For this example, we use mlxtend (https://rasbt.github.io/mlxtend/), a general-purpose library for machine learning. It provides several algorithms to identify frequent item sets; we use one here called apriori.

We first collect the data from OpenML. Normally we would use all categorical and (binned) numeric features, but for simplicity here, we will use only a small number of features.

As indicated, FPOF does require binning the numeric features. Usually we’d simply use a small number (perhaps 5 to 20) of equal-width bins for each numeric column. The pandas cut() method is convenient for this. This example is even a little simpler, as we just work with categorical columns.
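Although the SpeedDating example does not need it, binning a numeric column with pandas cut() might look like the following sketch. The age column and its values are made up for illustration, and five bins is just one choice within the range suggested above.

```python
import pandas as pd

# Hypothetical numeric column (not part of the SpeedDating example)
df = pd.DataFrame({"age": [21, 25, 34, 47, 52, 68]})

# Five equal-width bins over the observed range; labels=False returns
# the integer index of the bin each value falls into
bin_idx = pd.cut(df["age"], bins=5, labels=False)

# Store the bins as strings so later one-hot encoding treats them as categories
df["age_bin"] = "bin_" + bin_idx.astype(str)

# One-hot encode the binned column, ready for frequent item set mining
encoded = pd.get_dummies(df[["age_bin"]]).astype(bool)
print(df)
print(encoded.columns.tolist())
```

Equal-width bins keep the bin boundaries easy to describe. Where a column is heavily skewed, equal-count bins (for example with pandas qcut()) are a common alternative.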

import warnings

import pandas as pd
from mlxtend.frequent_patterns import apriori
from sklearn.datasets import fetch_openml

warnings.filterwarnings(action='ignore', category=DeprecationWarning)

# Collect the data and keep a small set of categorical features
data = fetch_openml('SpeedDating', version=1, parser='auto')
data_df = pd.DataFrame(data.data, columns=data.feature_names)
data_df = data_df[['d_pref_o_attractive', 'd_pref_o_sincere',
                   'd_pref_o_intelligence', 'd_pref_o_funny',
                   'd_pref_o_ambitious', 'd_pref_o_shared_interests']]

# One-hot encode, ensuring boolean columns as apriori expects
data_df = pd.get_dummies(data_df).astype(bool)

# Mine the frequent item sets
frequent_itemsets = apriori(data_df, min_support=0.3, use_colnames=True)

# Add each FIS's support to the score of every row that contains it
data_df['FPOF_Score'] = 0.0
for fis_idx in frequent_itemsets.index:
    fis = frequent_itemsets.loc[fis_idx, 'itemsets']
    support = frequent_itemsets.loc[fis_idx, 'support']
    col_list = list(fis)
    cond = True
    for col_name in col_list:
        cond = cond & data_df[col_name]
    data_df.loc[data_df[cond].index, 'FPOF_Score'] += support

# Normalize to the range 0.0 to 1.0, reversed so higher means more unusual
min_score = data_df['FPOF_Score'].min()
max_score = data_df['FPOF_Score'].max()
data_df['FPOF_Score'] = [(max_score - x) / (max_score - min_score)
                         for x in data_df['FPOF_Score']]

The apriori algorithm requires all features to be one-hot encoded. For this, we use pandas’ get_dummies() method.

We then call the apriori method to determine the frequent item sets. Doing this, we need to specify the minimum support, which is the minimum fraction of rows in which the FIS appears. We don’t want this to be too high, or the records, even the strong inliers, will contain few FISs, making them hard to distinguish from outliers. And we don’t want this too low, or the FISs may not be meaningful, and outliers may contain as many FISs as inliers. With a low minimum support, apriori may also generate a very large number of FISs, making execution slower and interpretability lower. In this example, we use 0.3.

It’s also possible, and sometimes done, to set restrictions on the size of the FISs, requiring they relate to between some minimum and maximum number of columns, which may help narrow in on the form of outliers you’re most interested in.
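One way to apply such a restriction is to filter the mined item sets by length after the fact, as in the sketch below. The DataFrame is a hand-built stand-in with the same two columns apriori returns, and the item names, supports, and the min_len and max_len bounds are made up for illustration. mlxtend’s apriori also takes a max_len argument, which caps the item set size during mining itself.

```python
import pandas as pd

# Hand-built stand-in for the apriori output (made-up supports and item names)
frequent_itemsets = pd.DataFrame({
    "support": [0.60, 0.45, 0.40, 0.35, 0.31],
    "itemsets": [
        frozenset({"a_x"}),
        frozenset({"b_y"}),
        frozenset({"a_x", "b_y"}),
        frozenset({"a_x", "c_z"}),
        frozenset({"a_x", "b_y", "c_z"}),
    ],
})

# Keep only item sets covering between min_len and max_len columns (inclusive)
min_len, max_len = 2, 3
lengths = frequent_itemsets["itemsets"].apply(len)
restricted = frequent_itemsets[lengths.between(min_len, max_len)]
print(restricted)
```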

The frequent item sets are then returned in a pandas dataframe with columns for the support and the list of column values (in the form of the one-hot encoded columns, which indicate both the original column and value).

To interpret the results, we can first view the frequent_itemsets, shown next. To include the length of each FIS we add:

frequent_itemsets['length'] = frequent_itemsets['itemsets'].apply(lambda x: len(x))

There are 24 FISs found, the longest covering three features. The following table shows the first ten rows, sorting by support.

We then loop through each frequent item set and increment the score for each row that contains the frequent item set by the support. This can optionally be adjusted to favor frequent item sets of greater lengths (with the idea that an FIS with a support of, say, 0.4 and covering 5 columns is, everything else equal, more relevant than an FIS with support of 0.4 covering, say, 2 columns), but here we simply use the number and support of the FISs in each row.
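As a rough illustration of how such a length adjustment could work, the sketch below multiplies each FIS’s support by its length raised to a power. This weighting scheme, the row_score() helper, and the length_power parameter are assumptions made for illustration, not part of FPOF as described here. Setting length_power to 0.0 gives the plain sum of supports used in this article.

```python
def row_score(row_items, fis_support, length_power=0.0):
    """Sum support * len(fis) ** length_power over the FISs the row contains.

    length_power=0.0 reproduces the unweighted sum of supports; larger
    values favor longer item sets (a hypothetical weighting scheme).
    """
    return sum(
        support * len(fis) ** length_power
        for fis, support in fis_support.items()
        if fis <= row_items
    )


# Made-up item sets and supports
fis_support = {
    frozenset({"a=1"}): 0.5,
    frozenset({"a=1", "b=2"}): 0.4,
    frozenset({"c=3"}): 0.4,
}
row = {"a=1", "b=2", "c=3"}

plain = row_score(row, fis_support)                       # 0.5 + 0.4 + 0.4
weighted = row_score(row, fis_support, length_power=1.0)  # 0.5 + 0.4 * 2 + 0.4
print(plain, weighted)
```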

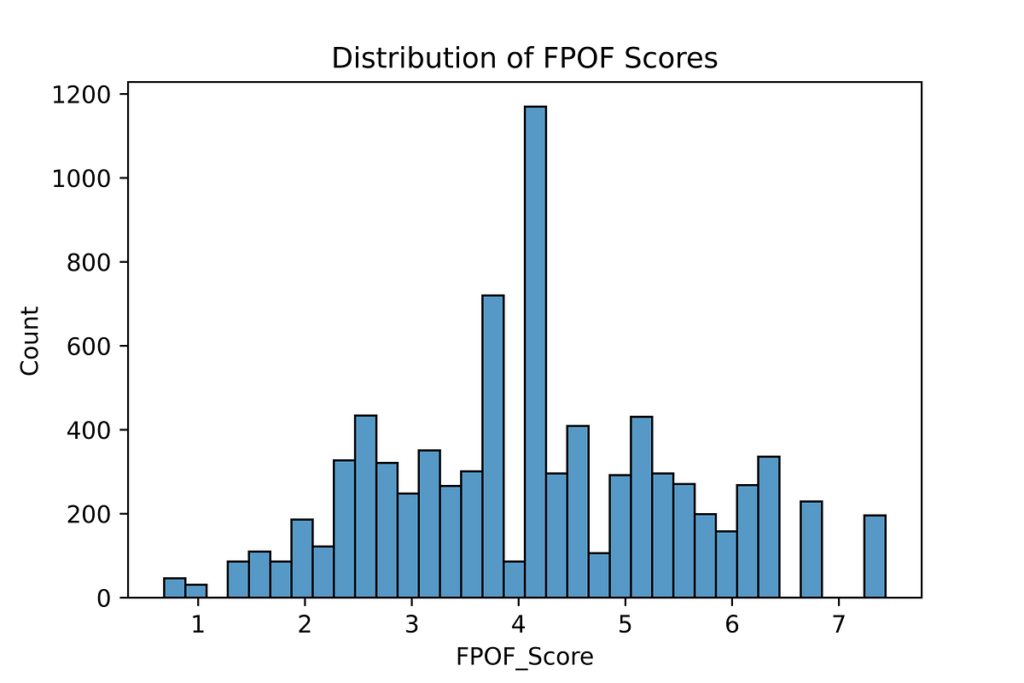

This actually produces a score for normality and not outlierness, so when we normalize the scores to be between 0.0 and 1.0, we reverse the order. The rows with the highest scores are now the strongest outliers: the rows with the fewest and the least common frequent item sets.

Adding the score column to the original dataframe and sorting by the score, we see the most normal row:

We can see the values for this row match the FISs well. The value for d_pref_o_attractive is [21–100], which is an FIS (with support 0.36); the values for d_pref_o_ambitious and d_pref_o_shared_interests are [0–15] and [0–15], which together are also an FIS (support 0.59). The other values also tend to match FISs.

The most unusual row is shown next. This matches none of the identified FISs.

As the frequent item sets themselves are quite intelligible, this method has the advantage of producing reasonably interpretable results, though this is less true where many frequent item sets are used.

The interpretability can be reduced, as outliers are identified not by containing FISs, but by lacking them, which means explaining a row’s score amounts to listing all the FISs it does not contain. However, it is not strictly necessary to list all missing FISs to explain each outlier; listing a small set of the most common FISs that are missing will be sufficient to explain outliers to a decent level for most purposes. Statistics about the FISs that are present, and about the normal numbers and frequencies of the FISs present in rows, provide good context for comparison.
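A minimal sketch of this kind of explanation follows. The most_common_missing() helper, the item names, and the supports are all made up for illustration. For a given row, it returns the highest-support FISs that the row does not contain.

```python
def most_common_missing(row_items, fis_support, top_n=3):
    """Return the top_n highest-support FISs not fully contained in the row."""
    missing = [
        (sorted(fis), support)
        for fis, support in fis_support.items()
        if not fis <= row_items
    ]
    # Most common (highest support) missing item sets first
    missing.sort(key=lambda pair: pair[1], reverse=True)
    return missing[:top_n]


# Made-up frequent item sets and a row flagged as an outlier
fis_support = {
    frozenset({"color=red"}): 0.8,
    frozenset({"size=small"}): 0.6,
    frozenset({"color=red", "size=small"}): 0.5,
    frozenset({"shape=round"}): 0.4,
}
outlier_row = {"color=blue", "size=small", "shape=square"}

explanation = most_common_missing(outlier_row, fis_support, top_n=2)
for items, support in explanation:
    print(f"missing {items} (support {support})")
```

Here the explanation points first at the missing color=red value, which appears in most rows, and then at the common pairing of color=red with size=small.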

One variation on this method uses the infrequent, as opposed to frequent, item sets, scoring each row by the number and rarity of the infrequent item sets it contains. This can produce useful results as well, but is significantly more computationally expensive, as many more item sets need to be mined, and each row is tested against many more item sets. The final scores can be more interpretable, though, as they are based on the item sets found, not missing, in each row.

Conclusions

Other than the code here, I am not aware of an implementation of FPOF in Python, though there are some in R. The bulk of the work with FPOF is in mining the FISs, and there are numerous Python tools for this, including the mlxtend library used here. The remaining code for FPOF, as seen above, is fairly simple.

Given the importance of interpretability in outlier detection, FPOF can very often be worth trying.

In future articles, we’ll go over some other interpretable methods for outlier detection as well.

All images are by the author
