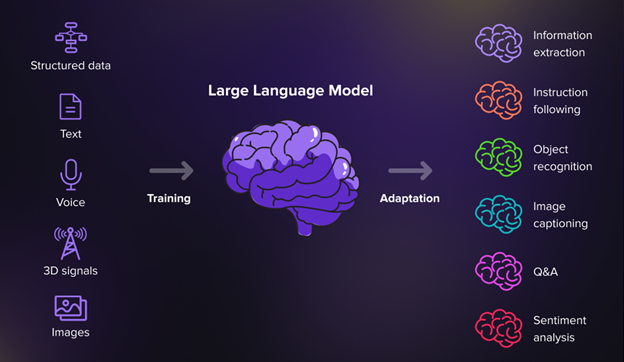

The latest large language models (LLMs), though still in their infancy, are in some ways much smarter than the average human is, or will ever be. Not only do they know more, but they also have a much greater capacity to process and act on vast arrays of instructions.

We’ve recently explored the ability of LLMs to interpret or generate text across numerous parameters with extraordinary precision. We have demonstrated, with great success, their ability to evaluate unstructured text blocks and grade them along various scales. Furthermore, we have been able to generate specific, tailored content using a complex series of instructions that can be tuned with precision to the same set of scales.

We’re entering a new era of performance-based, data-driven content in which we can transform LLMs into written content production facilities, complete with a control board of knobs and dials for numerically fine-tuning marketing content across various formats.

Here, we share our initial experimental findings and provide a general methodology for using LLMs to extract quantitative measures from otherwise unstructured text, opening the door to statistical analysis and optimization of content creation.

Large language models are perfectly suited to plot ideas

Large language models are built on mathematical vectors. This foundation allows them to adeptly translate blocks of text into numbers: rating, scoring, or mapping content into numeric values that capture the distinct qualities of each block. For example, we can ask the model to evaluate how ‘technical’ this blog is on a scale of 1-10, where the boundaries are defined by example or by user instruction. Similarly, LLMs can reverse this process and generate blocks of text that correspond to a numeric score, submitted by a user, for a certain attribute. For example, you can ask the LLM to write a blog post with a technicality score of 9 out of 10.
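As a rough illustration of both directions, here is a minimal sketch of the prompts involved. The function names, the prompt wording, and the reply parser are our own assumptions for illustration; the call to the model itself is left out, since it depends on whichever LLM client you use.

```python
import re


def build_scoring_prompt(text, attribute, low=1, high=10):
    """Ask the model to rate `text` on one attribute within [low, high]."""
    return (
        f"Rate how {attribute} the following text is on a scale of "
        f"{low} to {high}, where {low} is the least {attribute} and "
        f"{high} is the most {attribute}. Reply with a single number.\n\n"
        f"Text:\n{text}"
    )


def build_generation_prompt(topic, attribute, score, low=1, high=10):
    """Reverse direction: ask for text that would earn `score` on the attribute."""
    return (
        f"Write a blog post about {topic}. On a scale of {low} to {high} "
        f"for how {attribute} it is, the post should score {score}."
    )


def parse_score(reply, low=1, high=10):
    """Return the first number in a model reply, or None if missing or out of range.

    Assumes the reply leads with the score; a reply that restates the
    scale before the score would need stricter parsing.
    """
    match = re.search(r"-?\d+(?:\.\d+)?", reply)
    if match is None:
        return None
    value = float(match.group())
    return value if low <= value <= high else None
```

Rejecting out-of-range replies instead of clamping them makes it easier to spot when the model has ignored the scale.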

We can add further concepts, one axis at a time, to build a conceptual space. This is what we term a conceptual Cartesian space, which the LLM can refer to for content generation. We can plot a point in this space to define an idea by its position along each of the axes that define the space.
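One way to picture such a point is as a mapping from axis name to score. The sketch below is a hypothetical representation, not a prescribed format: `describe_point` turns a point into prompt instructions, and `distance` measures how far apart two ideas sit, assuming every axis uses the same scale.

```python
import math


def describe_point(point, low=1, high=10):
    """Render a point (axis name -> score) as one instruction line per axis."""
    lines = [
        f"- {axis}: {score} on a scale of {low} to {high}"
        for axis, score in point.items()
    ]
    return "Write content at this position:\n" + "\n".join(lines)


def distance(a, b):
    """Euclidean distance between two points that share the same axes."""
    if a.keys() != b.keys():
        raise ValueError("points must be defined on the same axes")
    return math.sqrt(sum((a[axis] - b[axis]) ** 2 for axis in a))
```

For example, `describe_point({"technicality": 9, "humor": 2})` produces one instruction line per axis that can be appended to a generation prompt.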

Our experiments and findings

We performed a series of experiments to validate the effectiveness and adaptability of conceptual Cartesian mapping using LLMs. We took a ‘ground-up’ approach to validating our methodology, starting with basic experiments and increasing the complexity at each step.

Gradients experiment

This experiment explores the LLM’s ability to scale content along linear gradients, giving users control when generating or evaluating text. We tested different scale ranges (1-10, 1-100) and demonstrated the model’s adherence to specific scoring frameworks. The results confirm the model’s ability to methodically follow the chosen gradient.
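If scores are collected on different ranges, they need a common footing before they can be compared. A simple linear mapping is one option; this is our own assumption for illustration, not a step the experiment prescribes.

```python
def rescale(score, src=(1, 10), dst=(1, 100)):
    """Linearly map a score from the src range onto the dst range."""
    src_low, src_high = src
    dst_low, dst_high = dst
    if not src_low <= score <= src_high:
        raise ValueError("score is outside the source range")
    # Fraction of the way along the source range, from 0.0 to 1.0.
    fraction = (score - src_low) / (src_high - src_low)
    return dst_low + fraction * (dst_high - dst_low)
```

The endpoints map onto each other, so a 1 stays a 1 and a 10 becomes a 100.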

Various scoring methods experiment

In this experiment, we examined the influence of different scoring methods on text output. The LLM was instructed to use various scoring frameworks, showcasing the model’s adaptability. It successfully crafted responses based on specific rules, indicating the customization potential of LLMs for different applications. We even used a respected psychological framework to grade (or diagnose) empathy scores for a block of content.

Multi-dimensional space experiment

This experiment delves into the model’s performance in multi-dimensional spaces. The study introduces concepts like practicality and technicality as additional axes, illustrating the LLM’s ability to handle complex ideas and multiple dimensions effectively. The results indicate the model’s agility in navigating intricate multi-dimensional spaces.

Unspecified relative space experiment

This experiment explores the LLM’s capability to quantitatively analyze ideas relative to other ideas, rather than as gradients along a single axis. One important practical application for marketers is positioning content relative to competitors; we got the LLM to generate a housing policy for a fictitious mayoral candidate that is quantitatively positioned relative to several existing candidates.

Our study demonstrated the model’s ability to handle open content generation tasks with quantitative precision, showcasing its potential in settings where ideas lack strict predefined frameworks.
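A prompt for this kind of relative positioning might look like the sketch below. The weighting scheme, the function name, and the prompt wording are hypothetical; they show one possible way to express a position relative to reference texts without naming any axes.

```python
def build_relative_prompt(task, references, weights):
    """Ask for content positioned between named reference texts.

    references: name -> reference text
    weights: name -> non-negative closeness weight (normalized to percentages)
    """
    if references.keys() != weights.keys():
        raise ValueError("every reference needs exactly one weight")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    parts = [f"{task}\n", "Reference positions:"]
    for name, text in references.items():
        parts.append(f"[{name}] {text}")
    parts.append("\nPosition the new content relative to the references as follows:")
    for name in references:
        share = round(100 * weights[name] / total)
        parts.append(f"- {share}% aligned with {name}")
    return "\n".join(parts)
```

With weights of 3 and 1 for two reference candidates, the prompt asks for content that is 75% aligned with the first and 25% aligned with the second.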

Attaching standard performance metrics

If we bind the conceptual Cartesian position of content to traditional metrics, we can analyze the performance of published content against these newly available numeric values. For example, we can study the performance of social media posts (e.g., likes, shares, click-through rates) against the conceptual scores the model assigns for attributes like humor, empathy, and technicality. Through statistical analysis, we can identify the optimal mix of conceptual attributes for a given context and use that coordinate position to generate new, better-performing content.
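A first pass at that analysis could be as simple as checking which attribute scores move together with an engagement metric. The sketch below uses a plain Pearson correlation; the data layout (`scores` per post plus a metric field) is an assumption made for illustration, and correlation alone is only a screening step, not proof that an attribute drives performance.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


def rank_attributes(posts, metric):
    """Sort attribute names by |correlation| with an engagement metric."""
    attributes = posts[0]["scores"].keys()
    outcome = [post[metric] for post in posts]
    correlations = {
        name: pearson([post["scores"][name] for post in posts], outcome)
        for name in attributes
    }
    return sorted(correlations.items(), key=lambda item: abs(item[1]), reverse=True)
```

Each post is expected to look like `{"scores": {"humor": 3, "technicality": 2}, "likes": 30}`; with enough real posts, a regression over all attributes at once would be the natural next step.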

The innovative combination of conceptual Cartesian mapping and LLMs gives us a new, methodical, and precise approach to general content creation.

Businesses can tailor their messaging with precision, maximizing engagement with their target audience while positioning their content relative to competitors. Political campaigns can craft nuanced narratives relative to other candidates or polling results. Educational institutions can create customized learning materials, enhancing student engagement and comprehension at the individual level.